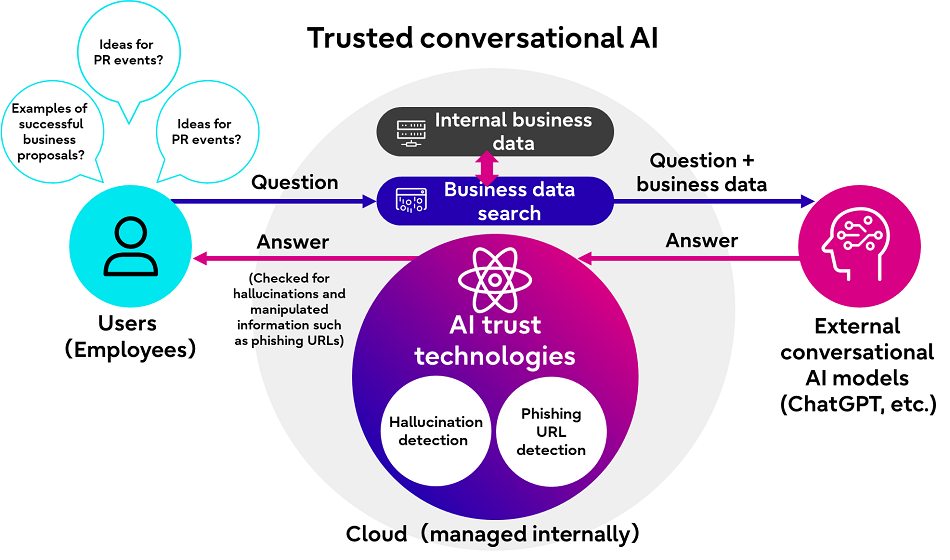

Fujitsu has announced the launch of two new AI trust technologies to improve the reliability of the responses from conversational AI models.

The newly developed technologies include a technique to detect hallucinations in conversational AI models (a phenomenon in which generative AI creates incorrect or unrelated output) and a technique, jointly developed at the Fujitsu Small Research Lab (1) at Ben-Gurion University, to detect phishing site URLs implanted in the AI’s responses through poisoning attacks that inject false information.

With the new technologies, Fujitsu aims to provide corporate and individual users a tool to evaluate the reliability of replies from conversational AI, ultimately contributing to a more secure use of AI across a range of use cases, including for businesses aiming to implement the technology in actual operations.

Professor Yuval Elovici, Ben-Gurion University, comments: “Generative AI stands as a critical domain, and within it, the hallucination detection technology Fujitsu has developed emerges as pivotal for establishing trustworthy conversational AI systems. Researchers from Ben-Gurion University (BGU) and Fujitsu have pioneered an innovative technique to enhance the security of AI-based URL filtering against adversarial threats. Our breakthrough focuses on tabular data, resulting in a more resilient defense mechanism against adversarial attacks in the realm of AI-driven URL filtering. Moving ahead, Fujitsu and Ben-Gurion University are set to collaborate on forging novel security-centric advancements within the realm of Generative AI.”

Fujitsu will include these new technologies in its conversational AI core engine provided through the “Fujitsu Kozuchi (code name) – Fujitsu AI Platform,” which offers users access to a wide range of powerful AI and ML technologies.

The technology to detect hallucinations in conversational AI will be available to users in Japan starting September 28, 2023, and the technology to detect phishing site URLs in responses of conversational AI will follow in October 2023.

Both technologies will be available to corporate users as a demo environment via Kozuchi and to individual users via a dedicated portal site (2).

Fujitsu says it plans a roll-out of both technologies to the global market in the future.

Newly developed technologies

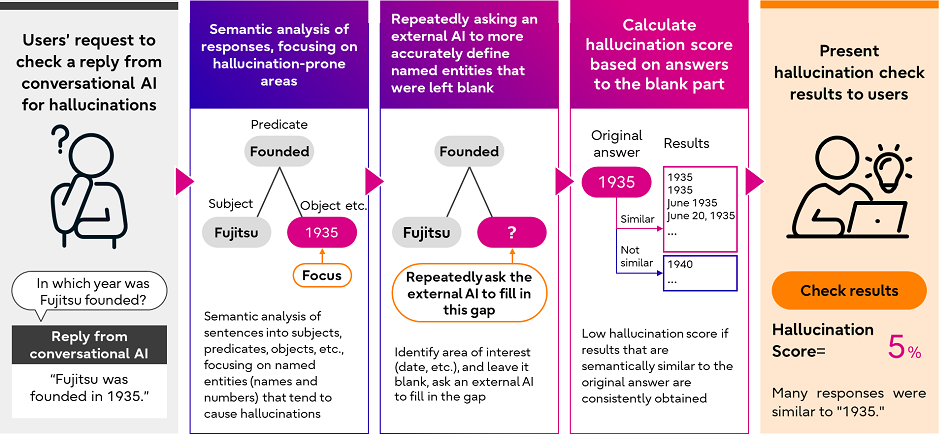

1. Technology for highly accurate detection of hallucination in responses of conversational AI

When applying conversational AI in business operations, businesses often extract information related to a question from pre-registered business data and add it as reference information when putting the question to an external conversational AI. While this method yields more accurate replies and reduces hallucinations, preventing them completely remains an open issue: in some cases the conversational AI fails to correctly extract the information related to the question and therefore produces unrelated or incorrect replies. Methods exist to estimate the degree to which an AI’s reply might be a hallucination (the hallucination score), but estimating this score accurately remains difficult because conversational AI uses many different phrases to express the same fact.

Based on the observation that conversational AI frequently generates incorrect information for proper nouns and numbers, and contents of replies tend to differ with repeated questions, Fujitsu has developed a technology to identify and focus on parts of sentences where hallucinations are likely to occur.

To calculate a highly accurate hallucination score, the new technology first breaks down the AI’s reply into its grammatical parts (subject, predicate, object, etc.) and then automatically identifies named entities within the reply. As a next step, the technology blanks out these named entities and repeatedly asks the external AI to fill in these specific expressions, so that the focus falls on the parts of the reply where hallucinations are most likely (Figure 2).
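The blank-and-re-ask idea can be illustrated with a minimal Python sketch. It is not Fujitsu's implementation: the regex below is a crude stand-in for real named-entity recognition, `ask_model` is a hypothetical callable that returns the external AI's guess for the blank, and scoring by the fraction of mismatching answers is an assumption made for illustration.

```python
import re
from typing import Callable, List

# Assumption: a crude stand-in for named-entity recognition that picks up
# numbers and runs of capitalized words (it will also catch sentence-initial
# words such as "The"; a real system would use a proper NER model).
ENTITY_PATTERN = re.compile(
    r"\b(?:\d[\d,.]*\d|\d|[A-Z][a-z]+(?:\s[A-Z][a-z]+)*)\b"
)


def find_entities(reply: str) -> List[str]:
    """Return candidate entities in order of appearance, without duplicates."""
    return list(dict.fromkeys(ENTITY_PATTERN.findall(reply)))


def hallucination_score(
    reply: str,
    ask_model: Callable[[str], str],  # hypothetical: masked text -> guess
    n_samples: int = 5,
) -> float:
    """Blank out each entity, re-ask the model n_samples times, and return
    the mean fraction of answers that disagree with the original entity."""
    entities = find_entities(reply)
    if not entities:
        return 0.0
    per_entity = []
    for entity in entities:
        masked = reply.replace(entity, "____", 1)
        answers = [ask_model(masked) for _ in range(n_samples)]
        mismatches = sum(
            answer.strip().lower() != entity.lower() for answer in answers
        )
        per_entity.append(mismatches / n_samples)
    return sum(per_entity) / len(per_entity)
```

A score near 0 means the model keeps reproducing the same entities when asked again; a score near 1 means its answers for the blanked parts keep changing, which the article describes as a typical sign of hallucination.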

Fujitsu benchmarked this technology using open data, including the WikiBio GPT-3 Hallucination Dataset (3) and found that it could improve the accuracy of detection (AUC-ROC) (4) by approximately 22% compared to other state-of-the-art methods for detecting AI hallucinations, such as SelfCheckGPT (5).
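For readers unfamiliar with the metric, AUC-ROC can be read as the probability that a randomly chosen hallucinated reply receives a higher score than a randomly chosen correct one. The sketch below computes it from that pairwise definition; the function name and the label convention (1 for hallucinated, 0 for correct) are assumptions for illustration and do not come from the benchmark itself.

```python
from typing import Sequence


def auc_roc(scores: Sequence[float], labels: Sequence[int]) -> float:
    """Pairwise AUC-ROC: the share of (hallucinated, correct) pairs in which
    the hallucinated reply has the higher score; ties count as half."""
    positives = [s for s, y in zip(scores, labels) if y == 1]
    negatives = [s for s, y in zip(scores, labels) if y == 0]
    if not positives or not negatives:
        raise ValueError("need at least one example of each class")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))
```

A value of 0.5 corresponds to random ranking and 1.0 to a detector that always scores hallucinated replies above correct ones.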

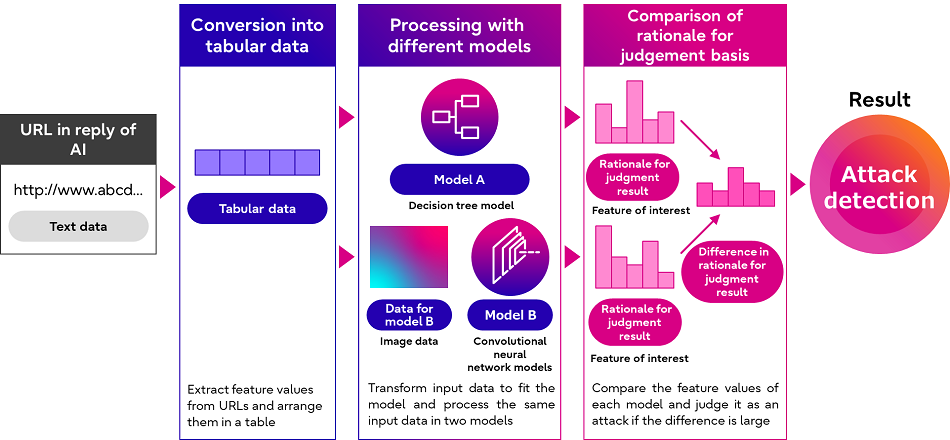

2. Technology for detection of phishing URLs in responses of conversational AI

Because conversational AI creates responses based on its training data, hostile entities can implant malicious information in that data and so trick the AI into producing responses that contain manipulated information, such as phishing URLs that lead to fake websites.

To address this issue, Fujitsu has developed a technology to detect manipulated URLs in the responses of conversational AI. Once the technology identifies a phishing URL, it issues a warning message to users.
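The detect-then-warn flow can be sketched as follows. This is only an illustration of the flow, not the detector itself: `is_phishing` is a hypothetical callable standing in for the actual classifier, and the URL regex and warning text are assumptions.

```python
import re
from typing import Callable, List

# Assumption: a simple pattern for http(s) URLs embedded in free text.
URL_PATTERN = re.compile(r"https?://[^\s<>\"')\]]+")


def extract_urls(text: str) -> List[str]:
    """Find URLs in a response and drop trailing sentence punctuation."""
    return [url.rstrip(".,;:!?") for url in URL_PATTERN.findall(text)]


def annotate_response(
    response: str,
    is_phishing: Callable[[str], bool],  # hypothetical classifier
) -> str:
    """Append a warning to the response if any URL in it is flagged."""
    flagged = [url for url in extract_urls(response) if is_phishing(url)]
    if not flagged:
        return response
    warning = "WARNING: possible phishing URL(s): " + ", ".join(flagged)
    return response + "\n\n" + warning
```

Keeping the classifier behind a plain callable means the same wrapper works whether the check is a blocklist lookup or a machine learning model.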

Fujitsu’s new technology not only detects phishing URLs but also increases the AI’s resistance to existing attacks that trick AI models into deliberate misjudgments, helping to ensure highly reliable responses. It builds on a technique jointly developed by Fujitsu and Ben-Gurion University of the Negev at the Fujitsu Small Research Lab established at Ben-Gurion University. The technique exploits the tendency of hostile entities to target a single type of AI model: it processes the same information with several different AI models and detects malicious data by evaluating the differences in the rationale behind each model’s judgment.

Beyond phishing URL detection, the technology can also be used to prevent general attacks that aim to deceive AI models working with tabular data, and can therefore help protect other services as well.
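One way to picture "evaluating the difference in rationale" is to compare per-feature attribution vectors from several models and flag inputs on which they disagree strongly. The sketch below rests on assumptions throughout: the article does not say how rationales are represented or compared, so the attribution vectors, the mean absolute difference measure, and the threshold of 0.3 are all hypothetical.

```python
from itertools import combinations
from typing import Callable, List, Sequence

Attribution = Sequence[float]  # one importance weight per tabular feature


def rationale_disagreement(attributions: List[Attribution]) -> float:
    """Mean absolute difference between attribution vectors, averaged
    over every pair of models."""
    pairs = list(combinations(attributions, 2))
    if not pairs:
        return 0.0
    total = 0.0
    for a, b in pairs:
        total += sum(abs(x - y) for x, y in zip(a, b)) / len(a)
    return total / len(pairs)


def is_suspicious(
    features: Sequence[float],
    explainers: List[Callable[[Sequence[float]], Attribution]],  # hypothetical
    threshold: float = 0.3,  # assumed value, would need tuning
) -> bool:
    """Flag an input when the models' rationales diverge beyond threshold."""
    attributions = [explain(features) for explain in explainers]
    return rationale_disagreement(attributions) > threshold
```

The intuition is that an input crafted to fool one model type tends to leave the other models' rationales unchanged, so a large divergence hints at manipulation.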